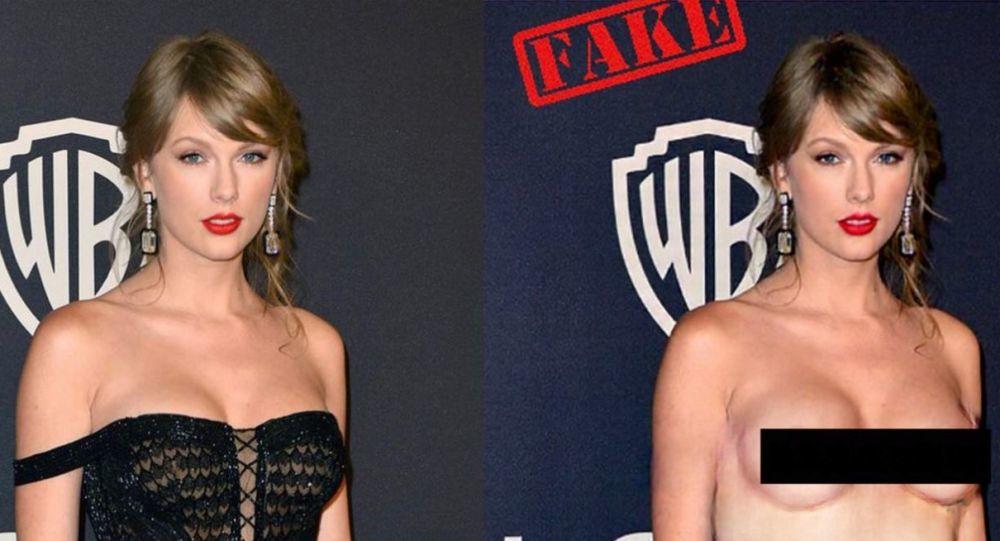

The app is based on a neural network that "removes" clothing from images of women; it does not work on images of men. The machine-learning algorithm was trained on thousands of photos of women to learn to replace their clothing with nude body parts.

Axar.az reports, citing foreign media, that DeepNude, the AI-powered app that rose to short-lived fame last month for its ability to turn photos of women into nudes, has been put up for auction.

The rights to the app are being advertised to investors on Flippa, a marketplace for selling and buying online businesses.

The asking price is $30,000. The sale includes the official domain, the app's Twitter account with just under 23,000 followers, copyright, trademarks, algorithms and source code, as well as the app itself and the datasets used to train the AI.

“DeepNude has a huge economic potential, which the founders do not have the capacity to exploit,” reads the lot description. “We are looking for someone capable of exploiting this potential and who can guarantee a professional development creating a new safe and fun app.”

The developers said the app had been hacked and modified, with illegal open-source versions appearing on the web.

“DeepNude is a tool. Like all instruments it can be used for good or for bad. We are looking for someone capable of rethinking and redesigning DeepNude as a fun tool, preventing it from being misused,” they wrote.

The website that offered would-be oglers the opportunity to download DeepNude was launched on 23 June. The app had a free version that put a large watermark on the fake nude images it produced, and a paid premium version that created uncensored images.

Users were able to crack the open-source code, effectively giving people free access to DeepNude’s premium version.

On 27 June, after increased media attention generated an unexpected flow of visitors, the website was taken down and never came back online.

The anonymous creators, who are believed to be from Estonia, explained that they were unable to handle all the traffic and didn’t want to make money from people using their brainchild maliciously.